Turning Data into Value

We build petabyte-scale data systems used by organizations in more than 60 countries, from the Americas through Europe to Asia. Our multidisciplinary team includes PhDs in fields ranging from quantitative finance and astrophysics to digital market strategy.

Industries We Serve

Trusted across industries, serving customers in over 60 countries worldwide.

Rating Agencies

Evaluate and manage financial risks with large-scale data analysis and streamline your due diligence processes.

Law Firms

Keep track of administrative and legal proceedings, scan and assess changes in contracts, and screen financial statements.

Financial Advisors

Provide your clients with high-quality financial information to make the most informed investment decisions.

Regulatory Authorities

Reduce fraud with automated tracing and analysis of suspicious changes in financial reports and offerings.

Merger Advisory

Automate due diligence and keep track of deal activity in real time with data-driven insights.

Equity Research

Monitor trading and institutional investment activities and uncover significant material contracts and agreements.

Executive Recruiting

Know when executive positions become vacant, gain insights into compensation structures, and research employment agreements.

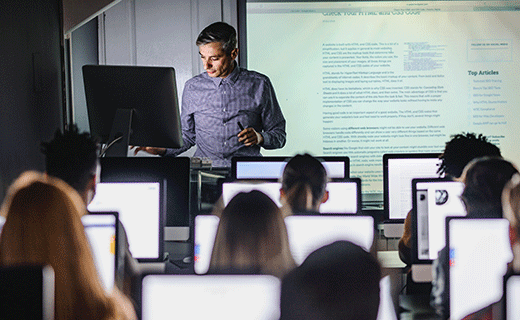

Education

Teach students how to generate insights from financial data or author new research papers about quantitative analysis.

Stock Brokers

Give your clients access to comprehensive data and create the edge they need to succeed in financial markets.

Stock Exchanges

Manage your compliance reporting processes and submit regulatory information to authorities with ease.

Investor Relations

Build cost-effective data streams for your publicly listed clients to comply with exchange rules.

Digital Services

Leverage large-scale datasets to enrich your data and generate new insights for your users.

What We Do

Headquartered in Munich, Germany, we are a multidisciplinary team with PhDs in fields ranging from quantitative finance and astrophysics to digital market strategy. Our products handle over 1 billion requests per month, serving terabytes of data daily to investment banks, hedge funds, brokers, securities exchanges, auditors, law firms, universities, and more.

Machine Learning & AI

We develop and deploy machine learning systems for financial data analysis, regulatory document processing, and domain-specific AI applications. Current work includes petabyte-scale NLP pipelines for SEC filing analysis, fine-tuned LLMs for financial text understanding, and production RAG systems powering real-time research tools.

Natural Language Processing

NLP pipelines for text classification, named entity recognition, sentiment analysis, and structured information extraction from unstructured documents at petabyte scale. Applications span regulatory filings (SEC 10-K, debt covenants, credit agreements), conference talks, audio-only interviews, earnings transcripts, legal documents, and multilingual web content.

Supervised Learning

Regression and classification models across tabular, time-series, and text data using XGBoost, LightGBM, CatBoost, deep neural networks, and ensemble methods. Automated feature engineering, systematic hyperparameter search, and rigorous cross-validation.

Unsupervised Learning

Clustering (k-means, DBSCAN, hierarchical) for segmentation and regime detection. Dimensionality reduction (PCA, UMAP, t-SNE) for exploratory analysis. Anomaly detection for transaction data and financial metrics. Topic modelling (LDA, BERTopic) for document corpora.

LLM Fine-Tuning

Open-source models (LLaMA, Mistral, Qwen) fine-tuned on our own GPU infrastructure via LoRA/QLoRA, and proprietary models via OpenAI and Google Vertex AI. Supervised fine-tuning, RLHF for alignment, and instruction tuning for reliable multi-step prompt execution. Full control over model weights, inference latency, and data privacy on self-hosted infrastructure.

Retrieval-Augmented Generation

RAG pipelines handling document chunking, embedding generation, vector indexing, retrieval ranking, and reranking. Powers real-time decision-making frameworks and research assistants with source citation.
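
A minimal sketch of two of the steps named above, document chunking and retrieval ranking, using only the Python standard library. The function names (`chunk_text`, `rank_chunks`), the word-based chunk size, and the toy vectors are illustrative assumptions, not the production implementation; real pipelines would obtain embeddings from a model and use a vector index instead of a linear scan.

```python
import math


def chunk_text(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into word-based chunks that overlap by `overlap` words."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_chunks(query_vec: list[float], chunk_vecs: list[list[float]], top_k: int = 3) -> list[int]:
    """Return indices of the top_k chunks, most similar to the query first."""
    scored = sorted(
        enumerate(chunk_vecs),
        key=lambda pair: cosine(query_vec, pair[1]),
        reverse=True,
    )
    return [index for index, _ in scored[:top_k]]
```

The overlap keeps sentences that straddle a chunk boundary retrievable from at least one chunk, at the cost of some duplicated storage.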

Model Evaluation & Monitoring

Stratified cross-validation, temporal validation, holdout testing, and production A/B testing. Continuous drift detection, distribution shift monitoring, and automated retraining triggers.
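
As a hedged illustration of temporal validation, the sketch below generates expanding-window splits in which every training index precedes every test index, so no future data leaks into training. The function name `expanding_window_splits` and its parameters are assumptions made for this example.

```python
def expanding_window_splits(n_samples: int, n_splits: int, test_size: int):
    """Return (train_range, test_range) pairs over time-ordered samples.

    Each test window follows its training window; the training window
    grows with every split while the test window keeps a fixed size.
    """
    splits = []
    for k in range(n_splits):
        test_end = n_samples - (n_splits - 1 - k) * test_size
        test_start = test_end - test_size
        if test_start <= 0:
            raise ValueError("not enough samples for the requested splits")
        splits.append((range(0, test_start), range(test_start, test_end)))
    return splits
```

With `n_samples=10`, `n_splits=3`, and `test_size=2`, the test windows are indices 4-5, 6-7, and 8-9.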

We have developed quantitative systems across systematic investing, algorithmic trading, and risk management. Projects include multi-factor strategy platforms for US equities, fully automated trading infrastructure with real-time execution, and backtesting engines processing billions of Monte Carlo simulations.

Systematic Strategy Development

Built quantitative systems powering systematic investment strategies covering US equities across large-cap, mid-cap, and small-cap universes. Platforms integrated cross-sectional factor signals, fundamental data, and alternative data sources, supporting strategies from intraday rebalancing to monthly portfolio construction.

Algorithmic Trading Infrastructure

Developed trading infrastructure handling order routing, execution optimization, slippage minimization, real-time position management, and dynamic risk limit enforcement. Delivered performance reporting covering Sharpe, Sortino, max drawdown, win/loss ratio, profit factor, and more. Post-trade analytics covered execution quality, fill rates, and market impact.
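
For readers unfamiliar with the reporting metrics listed above, here is a minimal sketch of how four of them can be computed from a series of per-period returns. The function names, the 252-period annualization factor, and the zero default for the risk-free rate and target return are assumptions for illustration; conventions vary between reporting systems.

```python
import math
from statistics import mean, stdev


def sharpe_ratio(returns, risk_free=0.0, periods_per_year=252):
    """Annualized Sharpe ratio: mean excess return over its sample std dev."""
    excess = [r - risk_free for r in returns]
    sd = stdev(excess)  # sample standard deviation, needs at least 2 points
    return math.sqrt(periods_per_year) * mean(excess) / sd if sd > 0 else math.nan


def sortino_ratio(returns, target=0.0, periods_per_year=252):
    """Like Sharpe, but only returns below the target count as risk."""
    excess = [r - target for r in returns]
    downside = math.sqrt(mean([min(0.0, e) ** 2 for e in excess]))
    return math.sqrt(periods_per_year) * mean(excess) / downside if downside > 0 else math.nan


def max_drawdown(returns):
    """Largest peak-to-trough loss of the compounded equity curve, as a fraction."""
    equity, peak, worst = 1.0, 1.0, 0.0
    for r in returns:
        equity *= 1.0 + r
        peak = max(peak, equity)
        worst = max(worst, 1.0 - equity / peak)
    return worst


def profit_factor(returns):
    """Sum of gains divided by sum of losses; infinite if there are no losses."""
    gains = sum(r for r in returns if r > 0)
    losses = -sum(r for r in returns if r < 0)
    return gains / losses if losses > 0 else math.inf
```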

Fundamental & Technical Analysis Systems

Built automated financial statement analysis pipelines processing thousands of securities: ratio analysis, DCF, residual income, and relative valuation models updated in real time. Delivered technical analysis systems processing price/volume data with classical indicators (RSI, MACD, Bollinger) and configurable signal combinations.
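
As an example of one classical indicator mentioned above, this sketch computes the Relative Strength Index with Wilder's smoothing. The function name `rsi` and the default 14-period window are conventional choices assumed here, and returning 100 when there are no losses in the window is one common convention rather than a universal rule.

```python
def rsi(prices, period=14):
    """Relative Strength Index (0 to 100) of the last price, Wilder smoothing."""
    if len(prices) <= period:
        raise ValueError("need more than `period` prices")
    deltas = [b - a for a, b in zip(prices, prices[1:])]
    gains = [max(d, 0.0) for d in deltas]
    losses = [max(-d, 0.0) for d in deltas]

    # Seed with simple averages over the first `period` changes.
    avg_gain = sum(gains[:period]) / period
    avg_loss = sum(losses[:period]) / period

    # Wilder smoothing over the remaining changes.
    for gain, loss in zip(gains[period:], losses[period:]):
        avg_gain = (avg_gain * (period - 1) + gain) / period
        avg_loss = (avg_loss * (period - 1) + loss) / period

    if avg_loss == 0:
        return 100.0  # convention assumed here: no losses in the window
    relative_strength = avg_gain / avg_loss
    return 100.0 - 100.0 / (1.0 + relative_strength)
```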

Backtesting Engines

Developed backtesting platforms with realistic transaction cost modeling, market impact estimation, and survivorship-bias-free datasets. Implemented walk-forward analysis, out-of-sample testing, and Monte Carlo simulation for confidence interval estimation.
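
One simple way to obtain a confidence interval by Monte Carlo resampling is the percentile bootstrap, sketched below for the mean per-period return of a backtest. This is an illustrative stand-in, not a description of the engines above: the function name and parameters are assumptions, and the naive i.i.d. resampling shown here ignores autocorrelation, which a block bootstrap would address.

```python
import random
from statistics import mean


def bootstrap_mean_ci(returns, n_resamples=10_000, alpha=0.05, seed=0):
    """Percentile-bootstrap confidence interval for the mean return.

    Resamples the observed returns with replacement, records the mean of
    each resample, and reads the interval off the sorted means.
    """
    rng = random.Random(seed)  # seeded for reproducible results
    n = len(returns)
    means = sorted(mean(rng.choices(returns, k=n)) for _ in range(n_resamples))
    lower = means[int((alpha / 2) * n_resamples)]
    upper = means[max(int((1 - alpha / 2) * n_resamples) - 1, 0)]
    return lower, upper
```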

Monte Carlo Simulation

Built infrastructure for running billions of sample paths to stress-test strategies and estimate tail-risk distributions. Covered correlated multi-asset scenarios, regime-switching models, and fat-tailed distributions. Parallelized across hundreds of CPU cores.

Portfolio Construction & Risk Tools

Delivered portfolio optimization and risk management systems: factor model construction (value, momentum, quality, low volatility), covariance estimation, and constrained optimization. Supported long-only, long-short, and market-neutral configurations with turnover, sector, and position-size constraints.

Infrastructure & DevOps

We operate a hybrid infrastructure spanning cloud providers, dedicated datacenters, and self-hosted GPU clusters. This includes multi-region deployments across hundreds of servers, a self-hosted inference platform on NVIDIA Blackwell GPUs, and petabyte-scale data lake architectures on AWS, Google Cloud, Hetzner, and other providers.

Cloud & Hybrid Architecture

Distributed systems on AWS and Google Cloud, and cost-efficient workloads on Hetzner and similar providers. Self-hosted bare-metal in dedicated datacenters for data sovereignty and sustained compute. All provisioned via Terraform and infrastructure as code.

GPU Inference

Fine-tuned models served on our own NVIDIA Blackwell GPUs with terabytes of RAM and hundreds of CPU cores. High-throughput, low-latency inference without third-party API dependencies. Automatic scaling based on request volume.

Multi-Region & CDN

Hundreds of servers across multiple geographical regions. Cross-region replication, automated failover, and geographic redundancy. Global data delivery via CDN edge caching with origin shielding and load distribution.

DevOps

CI/CD with blue-green and canary deployments for zero-downtime releases. Monitoring and alerting across system health, application performance, and user-facing metrics. Container image scanning, automated patching, and incident response runbooks.

Data Lakes & Object Storage

Data lake architectures on S3-compatible object storage organized into raw, processed, and curated layers. Partitioning and cataloging for efficient petabyte-scale querying. Lifecycle policies, encryption at rest, and audit logging.

Data Engineering

We run data pipelines processing terabytes of data daily from hundreds of sources. This includes real-time data streaming via Kafka, large-scale web extraction systems, and data normalization pipelines serving financial institutions across multiple technology stacks.

Extraction

Large-scale web data extraction systems, API ingestion workflows with schema evolution across hundreds of sources, and document parsing (PDF, HTML, proprietary formats).

Normalization

Automated pipelines transforming heterogeneous sources such as audio, video, text, and images into analysis-ready formats (Parquet, JSONL, Avro). Entity resolution, deduplication, unit conversion, and taxonomy mapping. Version-controlled, auditable transformation rules.

Database & Storage Systems

Redis, Kafka, MongoDB, PostgreSQL, pgvector, TimescaleDB, and many more. Vector databases for clustering, search, and embedding retrieval. Time-series databases for high-frequency financial data. In-memory stores for caching and real-time analysis. Document and relational stores selected by query pattern and consistency requirements.

Pipelines & Streaming

Batch and micro-batch ETL/ELT pipelines with scheduling, dependency management, and error handling. Real-time streaming via Kafka and Redis Streams with exactly-once or at-least-once delivery. Sub-second propagation for market data feeds, event processing, and operational monitoring.

Data Quality

Automated checks at every pipeline stage: schema conformance, value ranges, null rates, referential integrity, distribution properties. Failed validations quarantine records. Quality metrics tracked over time to catch upstream degradation.
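
The quarantine pattern described above can be sketched as follows. The schema, the value ranges, and all names (`SCHEMA`, `RANGES`, `check_record`, `validate`, `null_rate`) are hypothetical and chosen only for illustration; the sketch covers schema conformance, value ranges, and null rates, not referential integrity or distribution checks.

```python
from dataclasses import dataclass, field

# Hypothetical schema and value ranges for a price record.
SCHEMA = {"ticker": str, "price": float, "volume": int}
RANGES = {"price": (0.0, 1e7), "volume": (0, 10**12)}


@dataclass
class ValidationResult:
    passed: list = field(default_factory=list)
    quarantined: list = field(default_factory=list)  # (record, reasons) pairs


def check_record(record: dict) -> list[str]:
    """Return a list of human-readable failure reasons (empty if valid)."""
    reasons = []
    for name, expected_type in SCHEMA.items():
        value = record.get(name)
        if value is None:
            reasons.append(f"{name}: missing or null")
        elif not isinstance(value, expected_type):
            reasons.append(f"{name}: expected {expected_type.__name__}")
        elif name in RANGES:
            low, high = RANGES[name]
            if not low <= value <= high:
                reasons.append(f"{name}: {value} outside [{low}, {high}]")
    return reasons


def validate(records: list[dict]) -> ValidationResult:
    """Route each record to `passed` or `quarantined` with its reasons."""
    result = ValidationResult()
    for record in records:
        reasons = check_record(record)
        if reasons:
            result.quarantined.append((record, reasons))
        else:
            result.passed.append(record)
    return result


def null_rate(records: list[dict], name: str) -> float:
    """Fraction of records where the field is missing or null."""
    if not records:
        return 0.0
    return sum(1 for r in records if r.get(name) is None) / len(records)
```

Keeping the failure reasons next to each quarantined record makes it possible to track which check fails most often over time.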

API Development

We design and operate APIs serving over one billion requests per month to clients in 60+ countries. This includes high-throughput REST APIs for regulatory data, WebSocket streaming feeds for real-time events, and search engines querying 100+ million documents in sub-second response times.

High-Throughput APIs

REST and GraphQL APIs handling billions of requests. Efficient serialization, connection pooling, and response caching. Gateway-level rate limiting, authentication (API keys, OAuth, JWT), and request validation.
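
Rate limiting at a gateway is often implemented with a token bucket; the sketch below shows the idea for a single client on a single process. It is an assumption that this algorithm matches the gateways described above, and the class name, parameters, and injectable clock are illustrative only. A production limiter would typically keep its state in a shared store.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity` and a sustained `rate` of requests per second."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Refill based on elapsed time, then spend `cost` tokens if available."""
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

A request for which `allow()` returns `False` would usually be rejected with HTTP status 429 (Too Many Requests).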

Streaming APIs

WebSocket- and low-latency Layer-3-based streams for live data feeds of real-time events. Connection management with heartbeats, reconnection, and backpressure handling.

Search & Query Engines

Full-text search, faceted filtering, and relevance ranking over hundreds of millions of documents. Inverted indices, vector similarity search, and hybrid retrieval. Sub-second query response times.

Developer Experience

OpenAPI specifications, versioned APIs with backward compatibility, auto-generated SDKs, and sandbox environments. Per-endpoint monitoring of latency, error rates, and usage patterns.

Security & Compliance

Security is applied across every layer of our stack, from network to application to data. All systems enforce encryption in transit and at rest, follow zero-trust networking principles, and maintain immutable audit trails.

Encryption

TLS 1.3 on all data in transit, including internal service-to-service communication. AES-256 encryption at rest across databases, object stores, backups, and logs. Automated key rotation via dedicated KMS.

Hardening & Network

Servers hardened to CIS benchmarks. Zero-trust network architecture with segmented VPCs, private subnets, WAF, and DDoS protection. Mutual TLS (mTLS) for internal services. Key-based SSH with bastion hosts and full audit logging.

Access & Audit

RBAC with least-privilege across all systems. Multi-factor authentication. Immutable access logs with regulatory-aligned retention and compliant data handling.

Provider Compliance

All our cloud providers are SOC 2 Type II and ISO 27001 certified, with regular independent audits. Infrastructure runs on hyperscaler platforms (AWS, Google Cloud) and established European providers (Hetzner), each subject to ongoing compliance verification and contractual security obligations.

Research & Collaboration

We maintain active partnerships with leading universities and contribute to research in quantitative finance and investment strategies. This includes joint publications on financial market analysis, co-supervised doctoral research in finance and machine learning, and presentations at international conferences.

Academic Partnerships

Ongoing collaboration with universities on applied research in quantitative finance, machine learning, and large-scale data systems. Co-supervision of doctoral and post-doctoral work. Research outcomes feed into production systems.

Quantitative Finance Research

Published research on quantitative investment strategies, regulatory data for automated trading systems, and market trends. Contributing to public discourse on data governance and disclosure standards.

Get in Touch

Interested in working with us? Reach out — we'd love to hear from you.

info@datatwovalue.com

Data2Value GmbH

Dingolfinger Str. 15, 81673 Munich, Germany

Managing Director: Dr. Jan Schroeder

Commercial Register: District Court Munich, HRB 267997

VAT ID: DE346656979